Exploring Apache’s Hadoop MapReduce Tutorial

In the last post, I described the straightforward process of setting up an Ubuntu VM in which to run Hadoop. Once you can successfully run the Hadoop MapReduce example in the MapReduce Tutorial, you may want to examine its source code in an IDE such as Eclipse. To do so, install Eclipse:

```shell
sudo apt-get install eclipse-platform
```

Some common Eclipse settings to adjust:

- Show line numbers (Window > Preferences > General > Editors > Text Editors > Show line numbers).

- To make Eclipse use spaces instead of tabs (or vice versa), see this StackOverflow question.

- To auto-remove trailing whitespace in Eclipse, see this StackOverflow question.

To generate Eclipse projects for the Hadoop source code, src/BUILDING.txt lists these steps (which we cannot run yet):

```shell
cd ~/hadoop-2.7.4/src/hadoop-maven-plugins
mvn install
cd ..
mvn eclipse:eclipse -DskipTests
```

Before we can run these commands, we need to install the packages required for building Hadoop, which are also listed in src/BUILDING.txt. On the VM we set up, we do not need the packages listed under Oracle JDK 1.7. Instead, run these commands to install Maven, the native libraries, and ProtocolBuffer:

```shell
sudo apt-get -y install maven
sudo apt-get -y install build-essential autoconf automake libtool cmake zlib1g-dev pkg-config libssl-dev
sudo apt-get -y install libprotobuf-dev protobuf-compiler
```

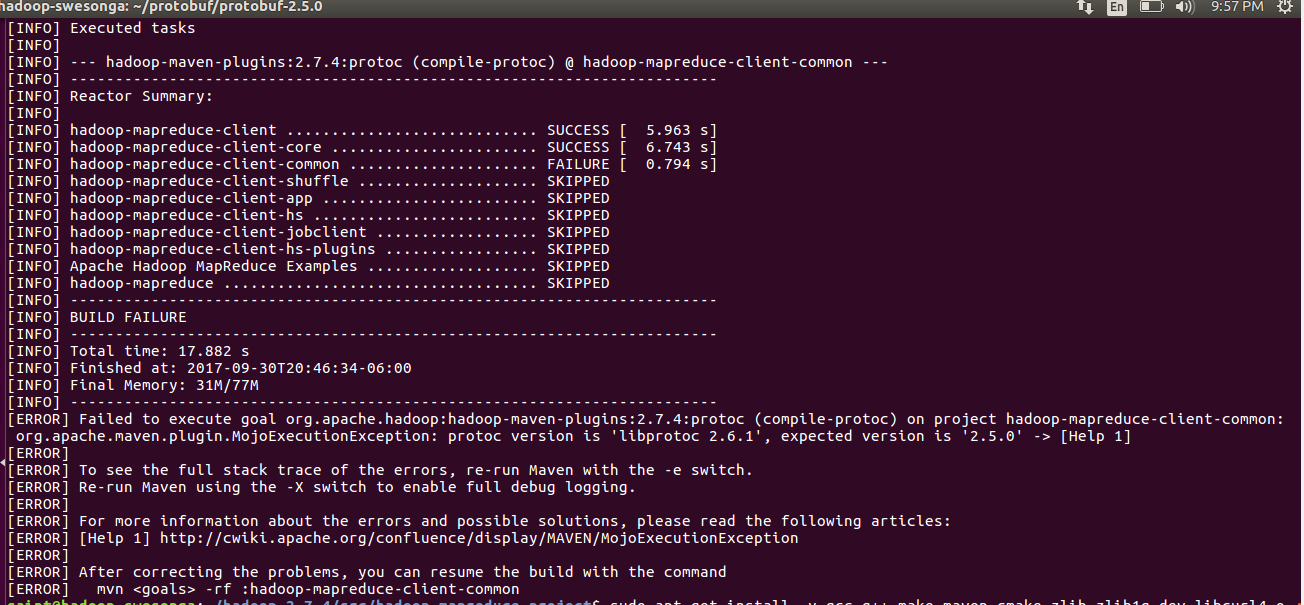
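If you want to confirm that the packages installed above actually put their tools on your PATH, a quick check like the following can help. This is a sketch, not something from src/BUILDING.txt: the missing_tools function is hypothetical, and the command list is my assumption of one binary per package (for example, libtoolize from libtool, protoc from protobuf-compiler). Header-only packages such as zlib1g-dev and libssl-dev are not checked.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the names of any commands not found on PATH,
# and return non-zero if at least one is missing.
missing_tools() {
  local tool missing=()
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || missing+=("$tool")
  done
  echo "${missing[*]}"          # prints an empty line when nothing is missing
  [ "${#missing[@]}" -eq 0 ]
}

# Assumed package -> command mapping: maven -> mvn, build-essential -> gcc/make,
# libtool -> libtoolize, protobuf-compiler -> protoc.
missing_tools mvn gcc make autoconf automake libtoolize cmake pkg-config protoc ||
  echo "Some build tools are missing; re-run the apt-get commands above." >&2
```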

Now here’s where things get interesting. The last apt-get command above installs version 2.6.1 of ProtocolBuffer, but src/BUILDING.txt states that version 2.5.0 is required. It turns out they aren’t kidding: if you try to generate the Eclipse project with version 2.6.1 (or any version other than 2.5.0), you’ll get an error similar to this one:

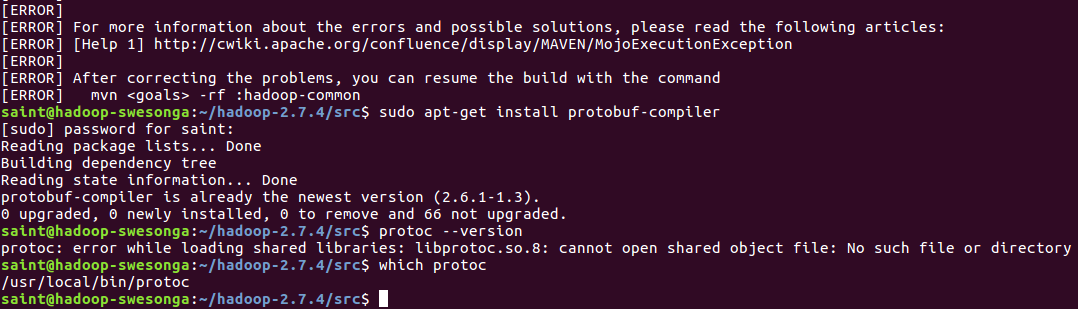

As suggested here and here, you can check the version by typing:

```shell
protoc --version
```
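Since the Hadoop build only works with exactly 2.5.0, it can be handy to script this check. The sketch below defines a hypothetical is_protoc_250 function that takes the last word of the protoc --version output (which has the form “libprotoc 2.5.0”) and succeeds only if it is 2.5.0. It takes the output as an argument, so you can try it even before protoc is installed.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed only when the given "protoc --version" output
# reports 2.5.0, the version src/BUILDING.txt asks for.
is_protoc_250() {
  # "libprotoc 2.5.0" -> "2.5.0": strip everything up to the last space.
  local version="${1##* }"
  [ "$version" = "2.5.0" ]
}

# Query the real compiler only if it is installed.
if command -v protoc >/dev/null 2>&1; then
  if is_protoc_250 "$(protoc --version)"; then
    echo "OK: $(protoc --version)"
  else
    echo "Found $(protoc --version), but the Hadoop build needs libprotoc 2.5.0" >&2
  fi
fi
```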

How do we install 2.5.0? It turns out we have to build ProtocolBuffer 2.5.0 from source ourselves, and (unlike in those now-outdated instructions) the sources must now be downloaded from GitHub: https://github.com/google/protobuf/releases/tag/v2.5.0

```shell
mkdir ~/protobuf
cd ~/protobuf
wget https://github.com/google/protobuf/releases/download/v2.5.0/protobuf-2.5.0.tar.gz
tar xvzf protobuf-2.5.0.tar.gz
cd protobuf-2.5.0
```

Now follow the instructions in the README.txt file to build the source code.

```shell
./configure --prefix=/usr
make
make check
sudo make install
protoc --version
```

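The output of the last command should now be “libprotoc 2.5.0”. Note: you will most likely need to pass the --prefix=/usr option to ./configure. Without it, the libraries are installed under the default /usr/local prefix, and protoc may then fail at run time because it cannot find its shared libraries.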
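If you expect to repeat this build (on another VM, say), the download and build steps above can be collected in one place. This is a sketch, not part of the protobuf README: both function names are hypothetical, and the URL helper simply reuses the pattern of the v2.5.0 download link, so other releases may name their files differently.

```shell
#!/usr/bin/env bash
# Sketch: the protobuf download-and-build steps as reusable functions.
# Function names are hypothetical; nothing is downloaded until build_protobuf runs.

# Print the release tarball URL, reusing the v2.5.0 download link pattern.
protobuf_tarball_url() {
  echo "https://github.com/google/protobuf/releases/download/v$1/protobuf-$1.tar.gz"
}

# Download, unpack, build, test, and install into /usr. The body runs in a
# subshell, so the cd commands do not change the caller's directory, and the
# && chain stops at the first step that fails.
build_protobuf() (
  version="${1:-2.5.0}"
  mkdir -p ~/protobuf &&
  cd ~/protobuf &&
  wget "$(protobuf_tarball_url "$version")" &&
  tar xvzf "protobuf-$version.tar.gz" &&
  cd "protobuf-$version" &&
  ./configure --prefix=/usr &&
  make &&
  make check &&
  sudo make install &&
  protoc --version   # expect "libprotoc $version"
)
```

Calling build_protobuf with no argument builds 2.5.0; mkdir -p is used instead of plain mkdir so that re-running it does not fail on an existing ~/protobuf directory.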

Now we can finally generate the Eclipse project files for the Hadoop sources.

```shell
cd ~/hadoop-2.7.4/src/hadoop-maven-plugins
mvn install
cd ..
mvn eclipse:eclipse -DskipTests
```
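Because a protoc version mismatch only shows up part-way through the Maven build, you may prefer a wrapper that checks the version first. The sketch below is hypothetical: the function name is made up, and the default source path ~/hadoop-2.7.4/src is an assumption based on this post’s layout. It runs the same Maven commands only when protoc --version prints exactly “libprotoc 2.5.0”.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper: generate the Eclipse project files only when
# protoc reports 2.5.0. The subshell body keeps the cd local to the call.
generate_eclipse_projects() (
  src_dir="${1:-$HOME/hadoop-2.7.4/src}"
  actual="$(protoc --version 2>/dev/null)"
  if [ "$actual" != "libprotoc 2.5.0" ]; then
    echo "Need libprotoc 2.5.0, found: ${actual:-no protoc on PATH}" >&2
    exit 1   # exits only the subshell, so the function returns 1
  fi
  cd "$src_dir/hadoop-maven-plugins" &&
  mvn install &&
  cd .. &&
  mvn eclipse:eclipse -DskipTests
)
```

With no argument, generate_eclipse_projects behaves like the four commands above, except that it refuses to start Maven when the wrong protoc is first on your PATH.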

Once project-file generation is complete:

- Type eclipse to launch the IDE.

- Go to the File > Import… menu option.

- Select the Existing Projects into Workspace option under General.

- In the Select root directory field, browse to the ~/hadoop-2.7.4/src folder. A list of the projects in the src folder should be displayed.

- Click Finish to import the projects.

You should now be able to navigate to the WordCount.java file and inspect the various Hadoop classes.
